
Next webinar: Apr 22, 2026

Dear Reader,

Welcome to the US Tax Day edition of our newsletter!

Announcements

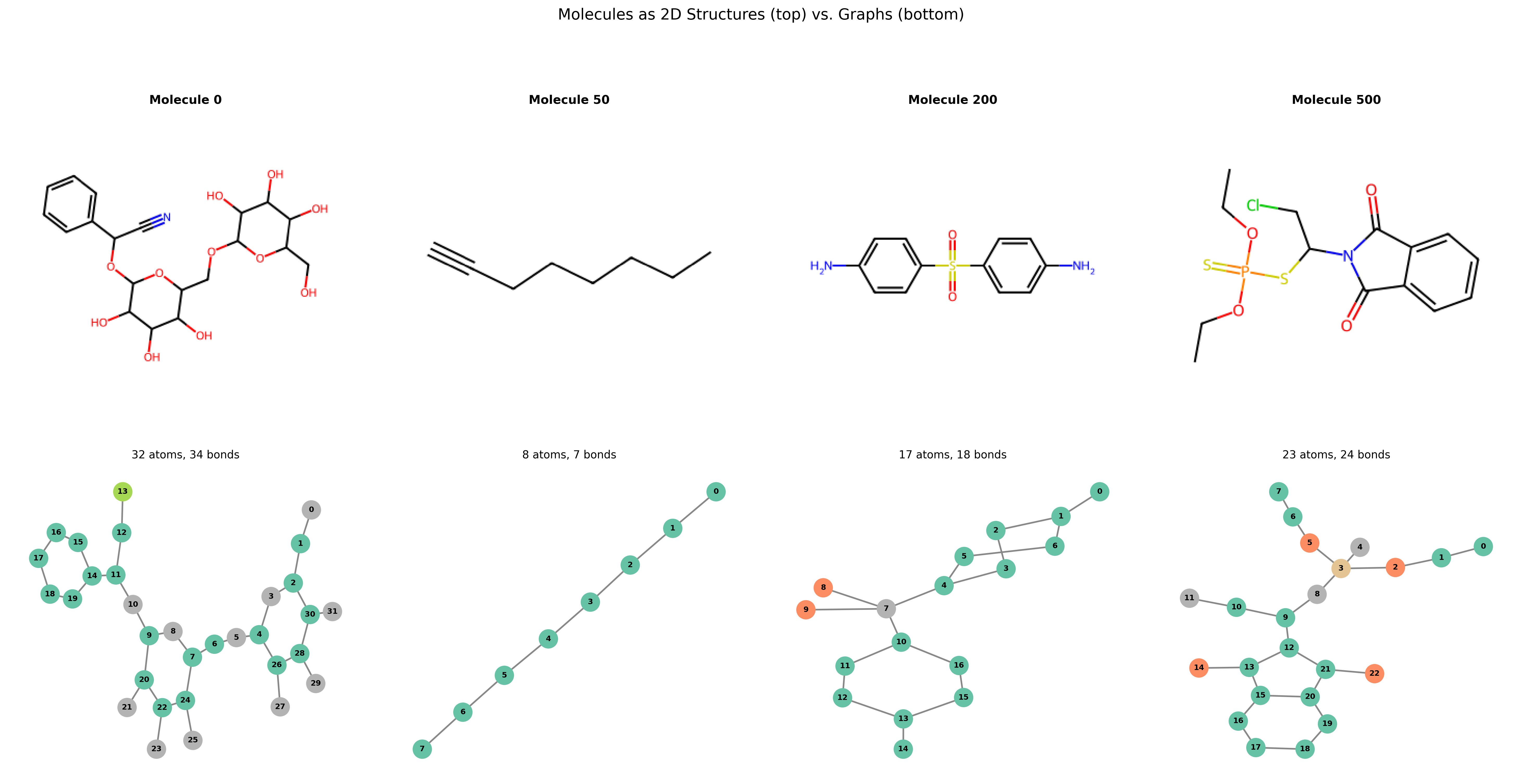

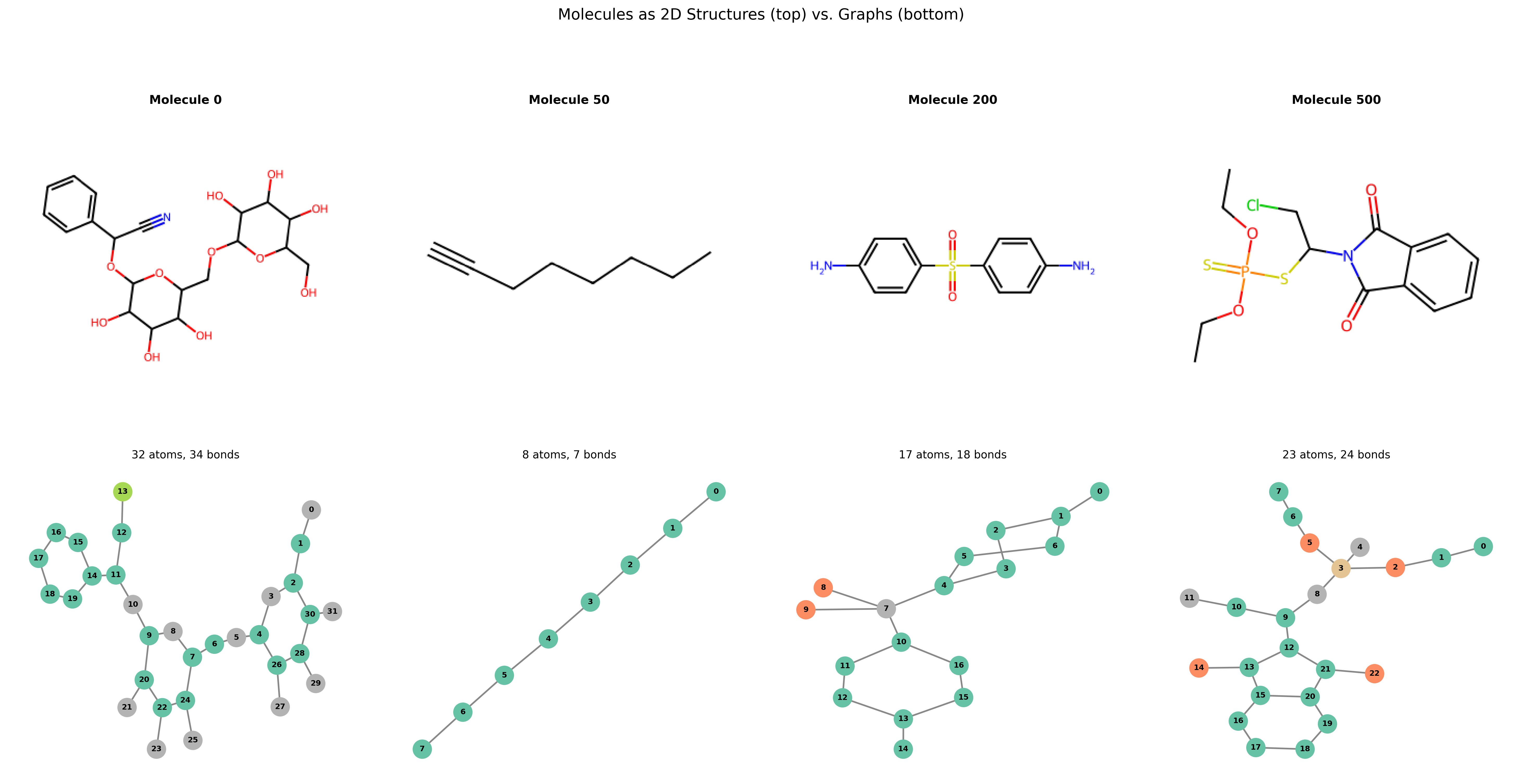

Graph Neural Networks are transforming how we model everything from social networks to drug discovery. Whether you're looking for a refresher or a starting point, the introductory guide in our latest blog post on Substack is a perfect place to begin.

Check it out and subscribe so you don't miss another post.

The current wave of AI progress is starting to look less like a story of raw model size and more like a story of systems: routines that turn repeated tasks into dependable workflows, tool use that lets models reach beyond the prompt, and a growing realization that real-world performance depends as much on orchestration as on intelligence itself.

This shift runs through this week’s reading, from the rise of practical automation and local coding assistants to sharper debates over how agents should call tools without collapsing into integration chaos. At the same time, the latest industry snapshot makes clear that investment, deployment, and capability gains are accelerating, even as compute partnerships and infrastructure deals reveal just how expensive this new era has become. The future of AI will be shaped not just by smarter models but by better interfaces, better engineering, and a much clearer understanding of where the real bottlenecks now lie.

On the academic front, this week's papers sketch a more complicated picture of AI progress than the usual triumphalist narrative: the field is advancing quickly, but not always on foundations sturdy enough to trust.

On one side are genuinely exciting signs of scientific reach, from hybrid deep learning approaches that map protein interactions at scale to emerging visions of “neural computers” and agent architectures that expand model capability through memory, tools, and externalized skills rather than sheer parameter count alone.

On the other is a sobering reminder that capability without rigor can mislead just as easily as it can illuminate: disease-prediction systems trained on shaky data, writing assistants that subtly nudge users’ social and political attitudes, and even safety interventions that may introduce new forms of harm all point to the same core lesson. As AI becomes more embedded in science, medicine, and everyday reasoning, the real challenge is no longer just making systems more powerful but ensuring they are grounded, transparent, and resilient in the messy environments where their outputs actually matter.

Our current book recommendation is "Designing Data-Intensive Applications" by M. Kleppmann and C. Riccomini. You can find all the previous book reviews on our website. In this week's video, we have an overview of AI in Videogames.

Data shows that the best way for a newsletter to grow is by word of mouth, so if you think one of your friends or colleagues would enjoy this newsletter, go ahead and forward this email to them. This will help us spread the word!

Semper discentes,

The D4S Team

"Designing Data-Intensive Applications" by M. Kleppmann and C. Riccomini is the kind of book that quietly raises the level of everyone who reads it. In this new edition, the authors do an outstanding job of explaining the core ideas behind modern data systems, like replication, consistency, storage, streaming, fault tolerance, and scalability, without reducing them to buzzwords or vendor-specific recipes. That makes the book especially valuable for data scientists and machine learning engineers, because it bridges the gap between building models and understanding the data infrastructure those models depend on in production.

What makes the book so compelling is its focus on first principles. Rather than teaching a single stack or a fleeting set of tools, it gives readers a durable framework for thinking about trade-offs in real systems. That is incredibly useful for ML engineers working on pipelines, model serving, retrieval systems, or any workflow where reliability and performance matter as much as model quality. The downside is that it is more conceptual than hands-on, and readers looking for quick code examples or direct coverage of topics like feature stores, vector databases, or modern LLM infrastructure may wish it connected the dots more explicitly.

Still, that broader systems lens is exactly why the book stands out. It is thoughtful, clear, and deeply practical in the ways that matter over the long run. For anyone in data science or machine learning who wants to understand not just how to build models, but how to build the systems that let those models survive contact with reality, this is an easy book to recommend.

- Automate work with routines [code.claude.com]

- Stanford Artificial Intelligence Index Report 2026 [hai.stanford.edu]

- The Mythical Agent-Month [wesmckinney.com]

- How We Broke Top AI Agent Benchmarks: And What Comes Next [rdi.berkeley.edu]

- Anthropic Will Use CoreWeave’s AI Capacity to Power Claude [bloomberg.com]

- Tool calling, open source, and the M×N problem [thetypicalset.com]

- I ran Gemma 4 as a local model in Codex CLI [medium.com/google-cloud]

- Dozens of AI disease-prediction models were trained on dubious data (M. Basu)

- Combining structural modeling and deep learning to calculate the E. coli protein interactome and functional networks (H. Zhao, C. Velez, A. Naravane, A. Saha, J. Feldman, J. Skolnick, D. Murray, B. Honig)

- Nonlinear effects of noise on outbreaks of mosquito-borne diseases (K. J.-M. Dahlin, K. Ebey, J. E. Vinson, J. M. Drake)

- Biased AI writing assistants shift users’ attitudes on societal issues (S. Williams-Ceci, M. Jakesch, A. Bhat, K. Kadoma, L. Zalmanson, M. Naaman)

- IatroBench: Pre-Registered Evidence of Iatrogenic Harm from AI Safety Measures (D. Gringras)

- Externalization in LLM Agents: A Unified Review of Memory, Skills, Protocols and Harness Engineering (C. Zhou, H. Chai, W. Chen, Z. Guo, R. Shan, Y. Song, T. Xu, Y. Yang, A. Yu, W. Zhang, C. Zheng, J. Zhu, Z. Zheng, Z. Zhang, X. Lou, C. Zhang, Z. Fu, J. Wang, W. Liu, J. Lin, W. Zhang)

- Neural Computers (M. Zhuge, C. Zhao, H. Liu, Z. Zhou, S. Liu, W. Wang, E. Chang, G. L. Lan, J. Fei, W. Zhang, Y. Sun, Z. Cai, Z. Liu, Y. Xiong, Y. Yang, Y. Tian, Y. Shi, V. Chandra, J. Schmidhuber)

AI and Videogames

All the videos of the week are now available in our YouTube playlist.

Upcoming Events:

Opportunities to learn from us

On-Demand Videos:

Long-form tutorials

- Natural Language Processing 7h, covering basic and advanced techniques using NLTK and PyTorch.

- Python Data Visualization 7h, covering basic and advanced visualization with matplotlib, ipywidgets, seaborn, plotly, and bokeh.

- Times Series Analysis for Everyone 6h, covering data pre-processing, visualization, ARIMA, ARCH, and Deep Learning models.
