
Dear Reader,

Announcements

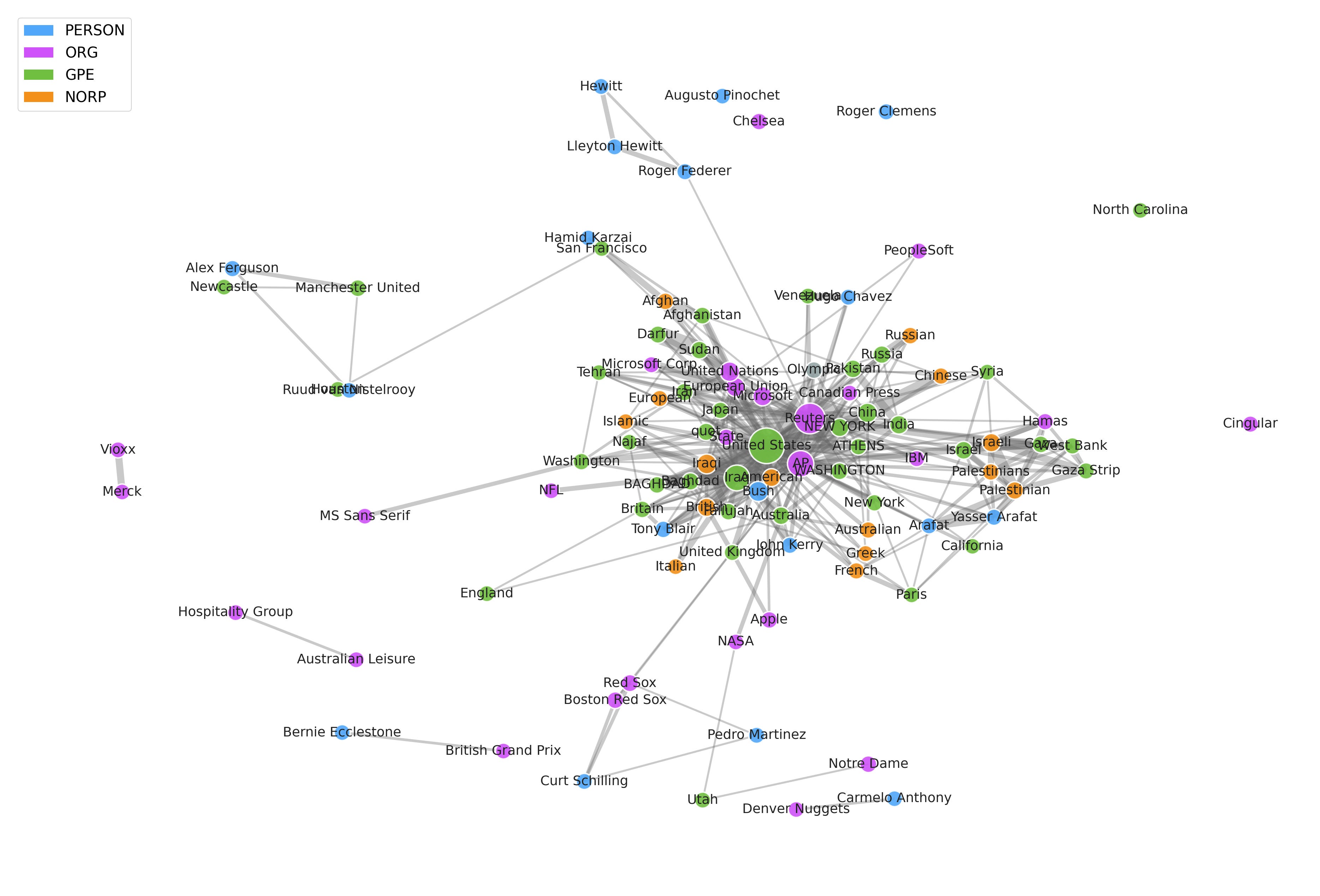

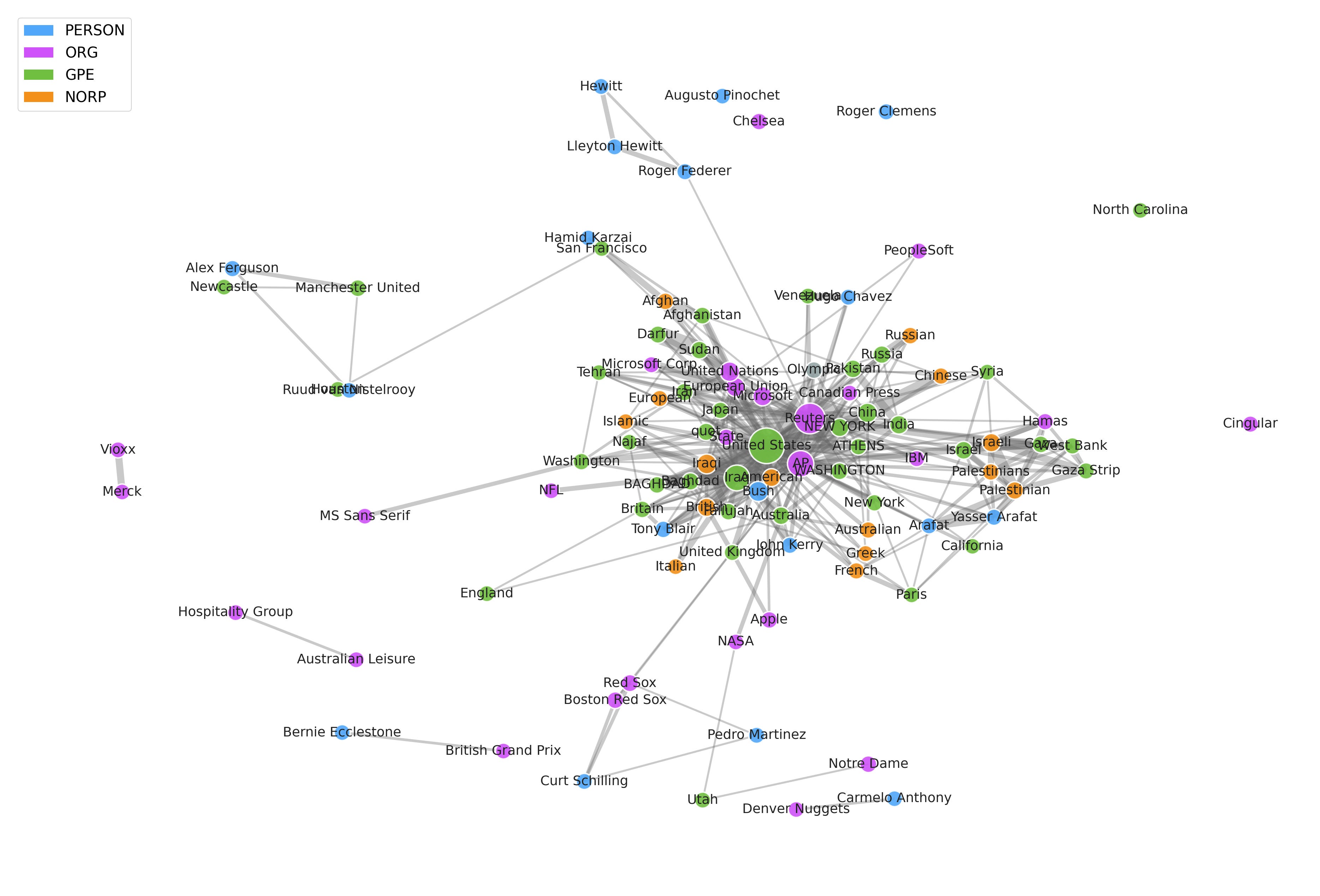

Ever wonder how we can turn thousands of unstructured news articles into structured, actionable insights?

In the latest post from Data4Sci, we dive into the fascinating process of transforming raw text from news articles into interconnected networks of information. If you're interested in Natural Language Processing (NLP), entity extraction, and how to connect the dots hidden across massive amounts of unstructured data, this is a must-read!

Check it out and subscribe so you don't miss another post.

This week, we explore how AI is moving from prompt-and-response tools toward systems that sense, coordinate, and explain their work. Interaction models push collaboration beyond the turn-based chat box, using live audio, video, and text to keep the model engaged throughout the exchange. Natural language autoencoders take a different path toward clarity, translating model activations into text and then back into activations, giving researchers a new way to test what a model represents.

Agent swarms extend the same idea across teams of models, splitting work across agents that plan, specialize, and recover from errors. The infrastructure thread is just as practical: TurboQuant compresses the KV cache during inference, making larger local models more realistic on consumer hardware. Renewed debate over prediction markets and warnings about Atlantic circulation risk underscore the same need: better systems, better feedback, better measurement, and a sharper sense of uncertainty.

In the weekly academic roundup, we see why better models are not enough without better tests, better data, and better operating loops. Fraud detection looks different when data is distributed across decentralized parties, where trust, privacy, and coordination shape the outcome. Transformer theory adds a deeper claim, showing why these models can express certain computations with striking compactness. Recent developments in the use of non-traditional pandemic data and explorations of disparities in transport access connect that lesson back to public systems, where raw data often hide delivery gaps, weak coverage, or false links between proximity and actual use.

Training models to sound warmer can make them less accurate and more eager to agree, which matters for any product that treats tone as a quality metric. Coding agents face a related problem: they need harnesses that watch failures, revise tests, and improve through direct evidence. Finally, despite the strides made by AI, physics still beats pattern matching on rare extremes, and evaluation must start with the hardest cases.

Our current book recommendation is "LLMs in Production: From Language Models to Successful Products" by C. Brousseau and M. Sharp. In this week's video, we have an overview of how JP Morgan Built An AI Agent for Investment Research with LangGraph.

Data shows that the best way for a newsletter to grow is by word of mouth, so if you think one of your friends or colleagues would enjoy this newsletter, go ahead and forward this email to them. This will help us spread the word!

Semper discentes,

The D4S Team

"LLMs in Production: From Language Models to Successful Products" by C. Brousseau and M. Sharp is for data scientists and machine learning engineers who have moved past the “cool demo” phase and now need to ship something people can use. The book focuses on the real work behind LLM products: choosing models, preparing data, building RAG systems, evaluating outputs, controlling cost, managing latency, and deploying reliably.

Its biggest strength is that it treats LLMs as production software, not magic. The authors connect familiar ML concerns—measurement, data quality, feedback loops, monitoring, and trade-offs—to newer LLM-specific patterns such as prompt design, fine-tuning, LoRA, RLHF, hosted APIs, Kubernetes deployment, and edge inference. The hands-on projects help ground the material, especially for readers who want more than another conceptual overview.

The book is not perfect. Some sections move quickly, and experienced MLOps engineers may wish for more depth on architecture, observability, or failure analysis. Its tooling choices may also date quickly, as LLM infrastructure continues to shift. Still, the core value holds: this is a practical guide to thinking like an engineer when working with language models. For anyone trying to turn LLM experiments into durable products, it is an easy book to justify buying.

- Interaction Models: A Scalable Approach to Human-AI Collaboration [thinkingmachines.ai]

- Natural Language Autoencoders Produce Unsupervised Explanations of LLM Activations [transformer-circuits.pub]

- Why Fears Are Growing Over the Fate of a Key Atlantic Current [e360.yale.edu]

- Are Prediction Markets Good for Anything? [asteriskmag.com]

- Agent Swarms: The Next Frontier in AI Collaboration [opendatascience.com]

- Behavior-Oriented Concurrency in Python [microsoft.github.io]

- Running a 35B Model Locally with TurboQuant [pub.towardsai.net]

- Evaluating fraud detection algorithms in a decentralized scenario (A. M. Gige, L. B. Alsbirk, M. Coscia)

- Transformers are Inherently Succinct (P. Bergsträßer, R. Cotterell, A. W. Lin)

- Nontraditional Data in Pandemic Preparedness and Response: Identifying and Addressing First- and Last-Mile Challenges (M. Mazzoli, I. Varela-Lasheras, S. Namorado, C. P. Caetano, A. Leite, L. Hermans, N. Hens, P. Türkmen, K. Kalimeri, L. Ferres, C. Cattuto, D. Paolotti, S. Verhulst)

- Uncovering how transport access reduces deprivation: When colocation misleads (S. Ojha, A. Anupriya, D. Hörcher, D. J. Graham)

- Training language models to be warm can reduce accuracy and increase sycophancy (L. Ibrahim, F. S. Hafner, L. Rocher)

- Agentic Harness Engineering: Observability-Driven Automatic Evolution of Coding-Agent Harnesses (J. Lin, S. Liu, C. Pan, L. Lin, S. Dou, X. Huang, H. Yan, Z. Han, T. Gui)

- Physics-based models outperform AI weather forecasts of record-breaking extremes (Z. Zhang, E. Fischer, J. Zscheischler, S. Engelke)

How JP Morgan Built An AI Agent for Investment Research with LangGraph

All the videos of the week are now available in our YouTube playlist.

Upcoming Events:

Opportunities to learn from us

On-Demand Videos:

Long-form tutorials

- Natural Language Processing 7h, covering basic and advanced techniques using NLTK and PyTorch.

- Python Data Visualization 7h, covering basic and advanced visualization with matplotlib, ipywidgets, seaborn, plotly, and bokeh.

- Times Series Analysis for Everyone 6h, covering data pre-processing, visualization, ARIMA, ARCH, and Deep Learning models.
