
Dear Reader,

Announcements

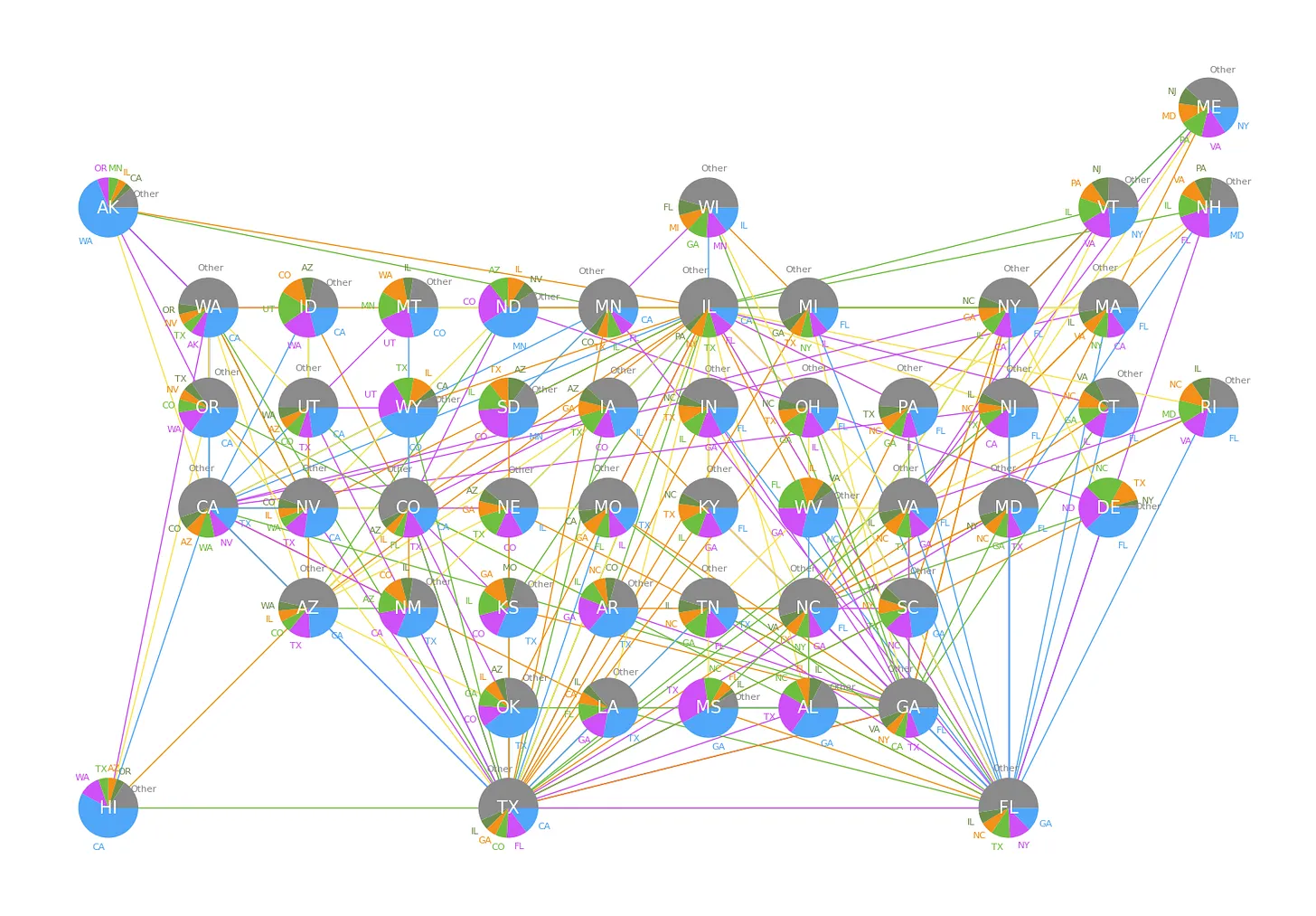

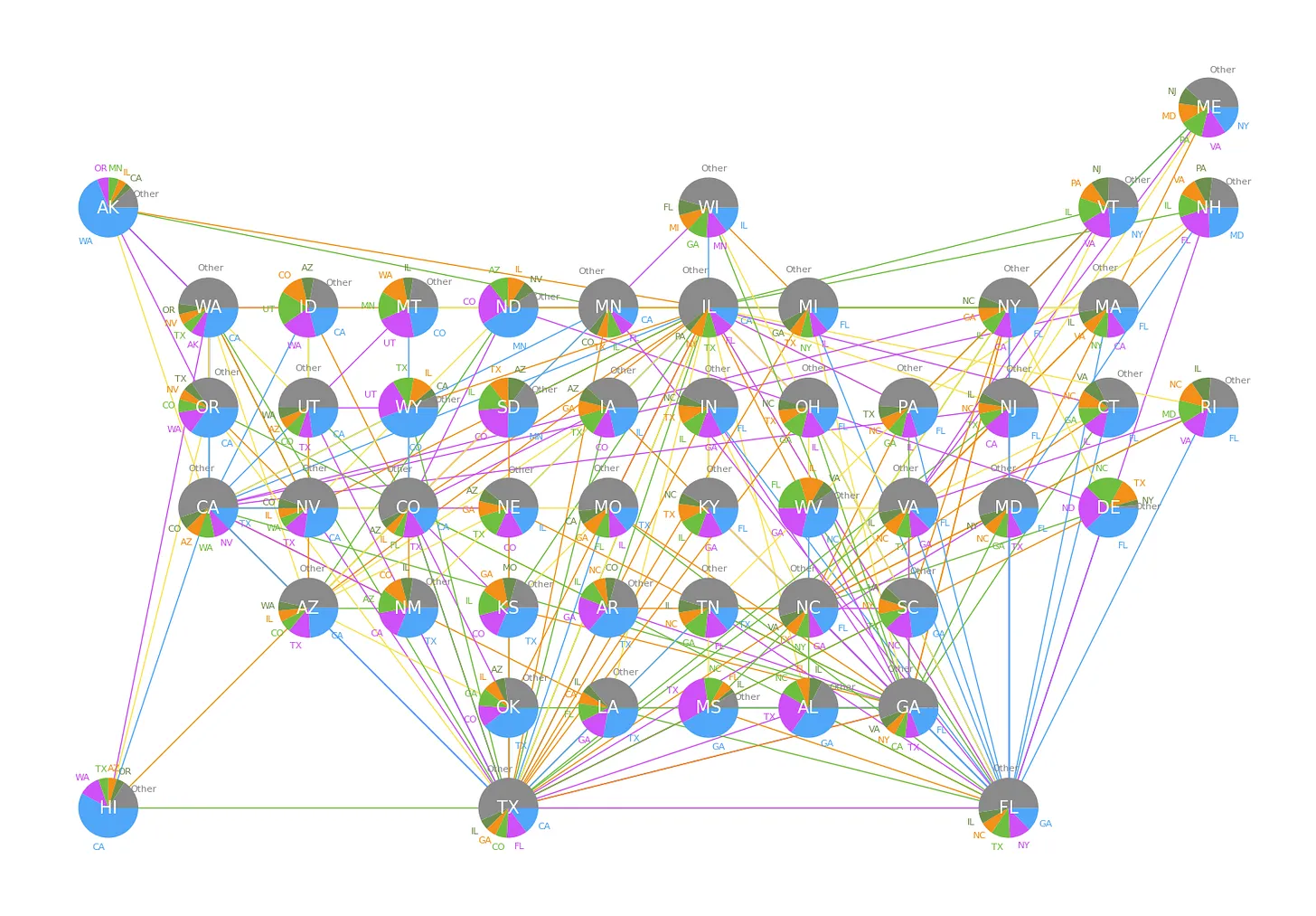

✈️ Mapping the skies: How do we visualize airline traffic between states?

We often think of air travel in terms of airports, but viewing it as a network of state-to-state connections reveals fascinating patterns in how our country moves.

Our latest Substack post uses data visualization to turn raw statistics into a clear story about infrastructure and mobility.

Check it out and subscribe so you don't miss another post.

AI is getting more serious, more useful, and harder to contain in tidy categories. Europe’s most visible AI challenger shows that frontier-model ambition no longer has to be routed through Silicon Valley, even as the warnings around existential risk keep growing louder and more contested. On the practical side, the center of gravity is shifting from demos to deployment: synthetic data is being framed less as a shortcut and more as a design problem, while new agent tooling is making it easier to connect models to real production systems, workflows, and command-line interfaces.

The same pattern shows up in weather forecasting, where AI systems are beginning to outperform earlier approaches on speed and accuracy, and even in biology, where a surprising discovery about DNA production is a reminder that “intelligence” is not the only frontier being rewritten.

This week’s research round-up circles a deceptively simple question: how do complex systems learn, coordinate, and converge? Prediction markets suggest that “crowd wisdom” may often depend less on the average participant than on a small informed minority, while studies of social conventions and cooperation show how groups repeatedly settle into shared behaviors, break them, and repair them over time. Deep learning is undergoing a similar shift from empirical craft to scientific object: new work argues that the field is inching toward a real theory of why neural networks work, while evidence that different language models learn similar number representations hints at hidden regularities beneath their surface differences.

On the engineering side, scalable second-order optimization points toward faster, more efficient model pretraining, and multilayer network science offers a broader language for understanding systems in which many kinds of relationships overlap.

After all is said and done, intelligence might turn out to be less of a single breakthrough and more of an emergent property of interaction, structure, and repeated adaptation.

Our current book recommendation is "Designing Data-Intensive Applications" by M. Kleppmann and C. Riccomini. You can find all the previous book reviews on our website. In this week's video, we have a lecture titled Building Large Language Models (LLMs) from Stanford University.

Data shows that the best way for a newsletter to grow is by word of mouth, so if you think one of your friends or colleagues would enjoy this newsletter, go ahead and forward this email to them. This will help us spread the word!

Semper discentes,

The D4S Team

"Designing Data-Intensive Applications" by M. Kleppmann and C. Riccomini is the kind of book that quietly raises the level of everyone who reads it. In this new edition, the authors do an outstanding job of explaining the core ideas behind modern data systems, like replication, consistency, storage, streaming, fault tolerance, and scalability, without reducing them to buzzwords or vendor-specific recipes. That makes the book especially valuable for data scientists and machine learning engineers as it bridges the gap between building models and understanding the data infrastructure those models depend on in production.

What makes the book so compelling is its focus on first principles. Rather than teaching a single stack or a fleeting set of tools, it gives readers a durable framework for thinking about trade-offs in real systems. That is incredibly useful for ML engineers working on pipelines, model serving, retrieval systems, or any workflow where reliability and performance matter as much as model quality. The downside is that it is more conceptual than hands-on, and readers looking for quick code examples or direct coverage of topics like feature stores, vector databases, or modern LLM infrastructure may wish it connected the dots more explicitly.

Still, that broader systems lens is exactly why the book stands out. It is thoughtful, clear, and deeply practical in the ways that matter over the long run. For anyone in data science or machine learning who wants to understand not just how to build models, but how to build the systems that let those models survive contact with reality, this is an easy book to recommend.

- How France’s Mistral Built A $14 Billion AI Empire By Not Being American [forbes.com]

- AI doom warnings are getting louder. Are they realistic? [www.nature.com]

- Designing synthetic datasets for the real world: Mechanism design and reasoning from first principles [research.google]

- The Ultimate Guide to Claude Opus 4.7 [productcompass.pm]

- Building agents that reach production systems with MCP [claude.com]

- Agents CLI in Agent Platform: create to production in one CLI [developers.googleblog.com]

- Google WeatherNext 2 — Our most accurate AI weather forecasting technology [deepmind.google]

- Scientists stunned by ‘fundamentally new way’ life produces DNA [www.science.org]

- Prediction Market Accuracy: Crowd Wisdom or Informed Minority? (R. G. Cram, Y. Guo, T. I. Jensen, H. Kung)

- There Will Be a Scientific Theory of Deep Learning (J. Simon, D. Kunin, A. Atanasov, E. Boix-Adserà, B. Bordelon, J. Cohen, N. Ghosh, F. Guth, A. Jacot, M. Kamb, D. Karkada, E. J. Michaud, B. Ottlik, J. Turnbull)

- Convergent Evolution: How Different Language Models Learn Similar Number Representations (D. Fu, T. Zhou, M. Belkin, V. Sharan, R. Jia)

- Multilayer network science: theory, methods, and applications (A. Aleta, A. S. Teixeira, G. Ferraz de Arruda, A. Baronchelli, A. Barrat, J. Kertész, A. Díaz-Guilera, O. Artime, M. Starnini, G. Petri, M. Karsai, S. Patwardhan, K. Coronges, A. McCranie, A. Vespignani, Y. Moreno, S. Fortunato)

- Sophia: A Scalable Stochastic Second-order Optimizer for Language Model Pre-training (H. Liu, Z. Li, D. Hall, P. Liang, T. Ma)

- A simple threshold captures the social learning of conventions (D. Guilbeault, S. Caplan, C. Yang)

- Human cooperation undergoes constant breakdown and repair (J. Wu)

Building Large Language Models (LLMs)

All the videos of the week are now available in our YouTube playlist.

Upcoming Events:

Opportunities to learn from us

On-Demand Videos:

Long-form tutorials

- Natural Language Processing 7h, covering basic and advanced techniques using NLTK and PyTorch.

- Python Data Visualization 7h, covering basic and advanced visualization with matplotlib, ipywidgets, seaborn, plotly, and bokeh.

- Times Series Analysis for Everyone 6h, covering data pre-processing, visualization, ARIMA, ARCH, and Deep Learning models.
