
Dear Reader,

Announcements

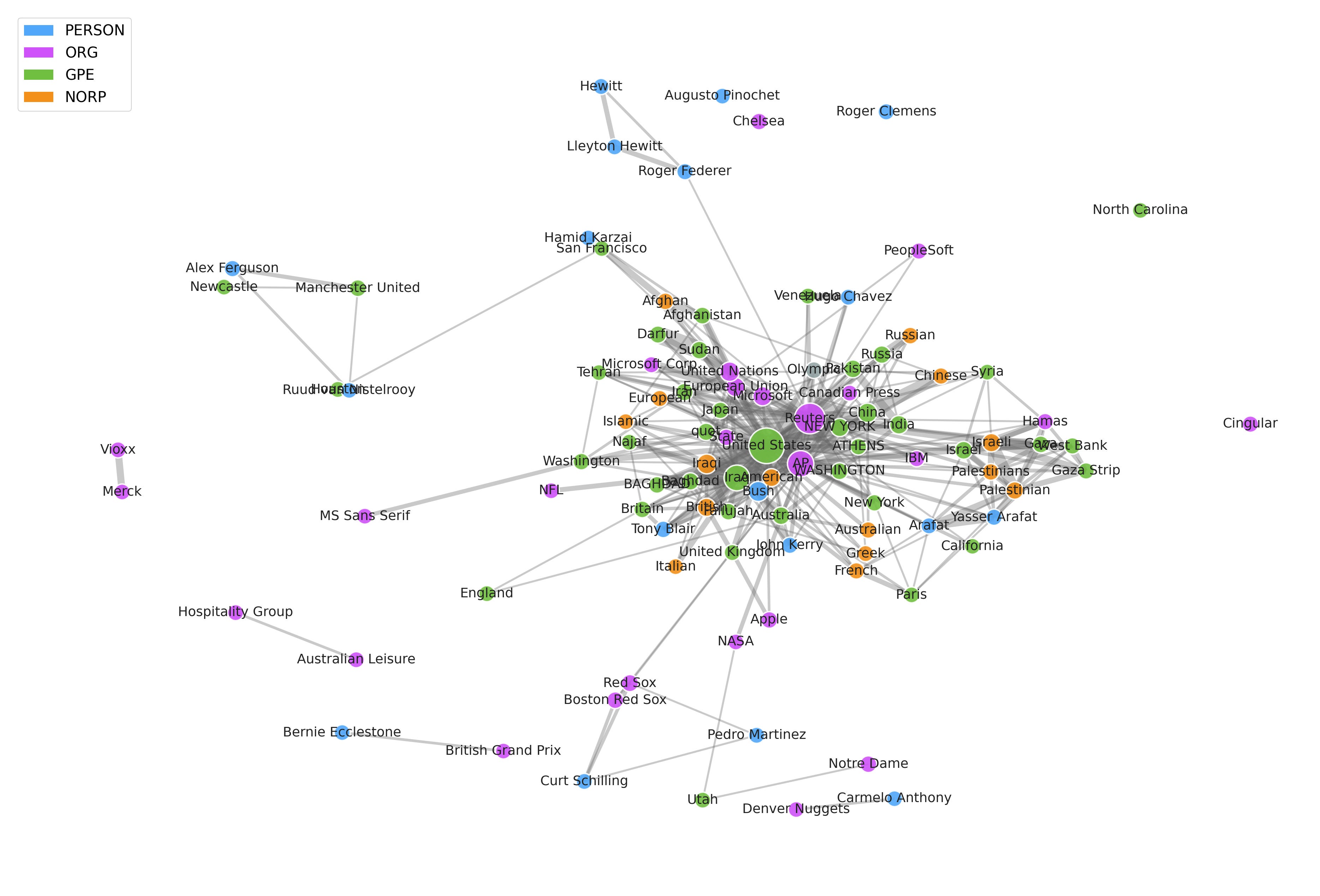

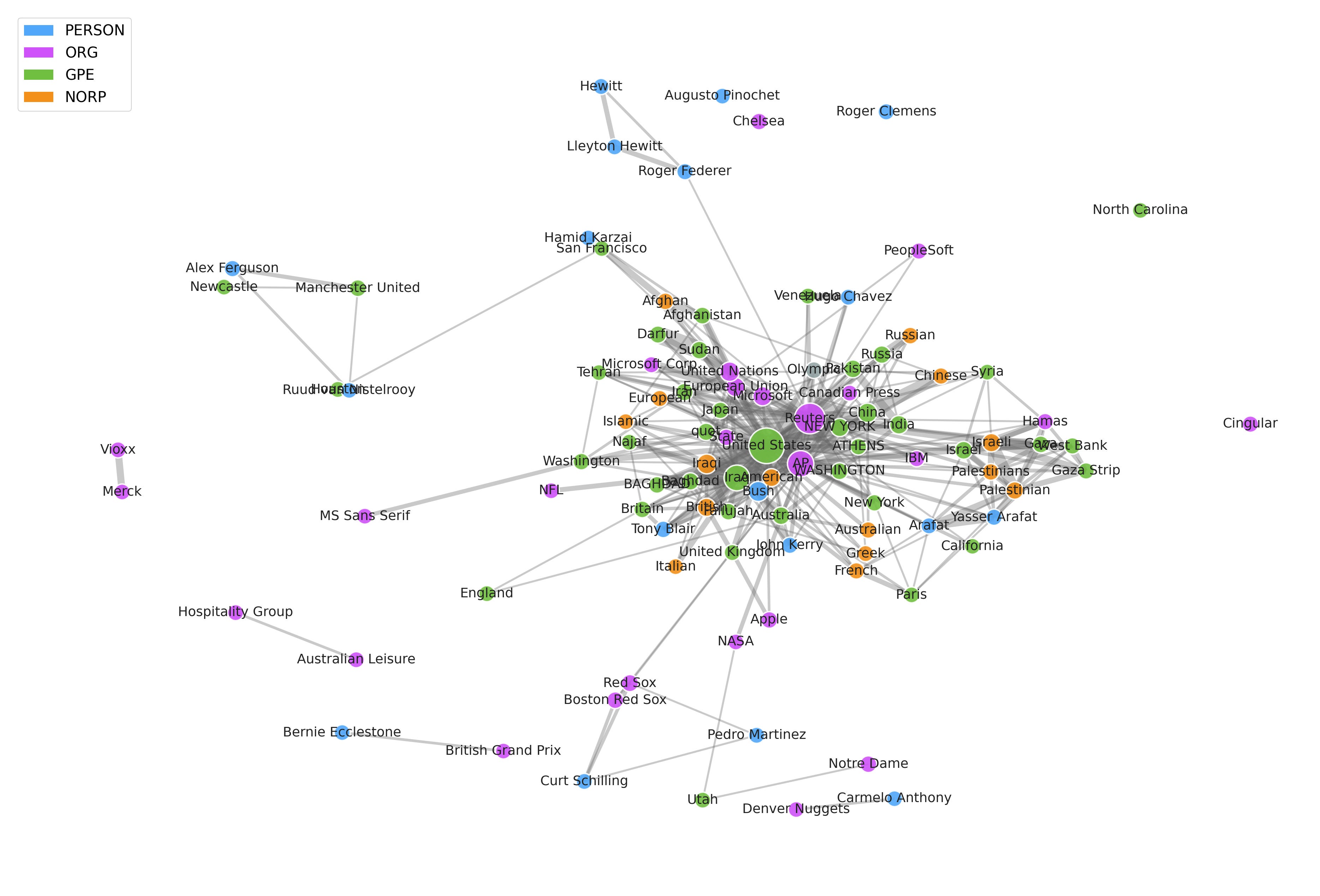

Ever wonder how we can turn thousands of unstructured news articles into structured, actionable insights?

In the latest post from Data4Sci, we dive into the fascinating process of transforming raw text from news articles into interconnected networks of information. If you're interested in Natural Language Processing (NLP), entity extraction, and how to connect the dots hidden across massive amounts of unstructured data, this is a must-read!

Check it out and subscribe so you don't miss another post.

This week's thread is that agents are moving from toy demos to production systems, and the hard parts are now tests, memory, search, safety, and money. Cheap code changes the job: build fast, rebuild often, write end-to-end tests, keep specs current, and trust taste more than prompt tricks.

The same shift shows up in infrastructure, where the agent loop belongs outside the sandbox so credentials stay protected, idle compute can sleep, failed sandboxes can be replaced, and shared memory can live in a database instead of a fragile local file. Search is turning into a core agent tool too, with builders weighing indexes, scrapers, model-native search, and real-time crawlers against cost, latency, and reliability. The darker side is just as concrete: biosecurity tests found chatbots giving dangerous lab and release guidance, and ad tracking appears to be entering chat through structured units and merchant-side signals. So the issue is whether teams can make LLMs useful without making them careless, leaky, or unsafe.

In our academic roundup, one paper treats factual recall as a rough scale for hidden model size, turning trivia into a pressure gauge for black-box systems. Another asks whether agents need a conductor, not just more tools, so that natural language can coordinate roles, checks, and handoffs. The paper on document delegation adds a sharper warning: handing edits to an LLM can quietly damage files, not just produce weak drafts. The representation papers point in a strange direction. Language models, vision models, and image generators seem to learn shared internal structure, as if very different systems are converging on the same map of the world. The main thread is control: how to measure these systems, steer them, test them, and decide where their hidden structure helps or hurts.

Our current book recommendation is "LLMs in Production: From Language Models to Successful Products" by C. Brousseau and M. Sharp. In this week's video, Andrej Karpathy discusses how to move "From Vibe Coding to Agentic Engineering."

Data shows that the best way for a newsletter to grow is by word of mouth, so if you think one of your friends or colleagues would enjoy this newsletter, go ahead and forward this email to them. This will help us spread the word!

Semper discentes,

The D4S Team

"LLMs in Production: From Language Models to Successful Products" by C. Brousseau and M. Sharp is for data scientists and machine learning engineers who have moved past the “cool demo” phase and now need to ship something people can use. The book focuses on the real work behind LLM products: choosing models, preparing data, building RAG systems, evaluating outputs, controlling cost, managing latency, and deploying reliably.

Its biggest strength is that it treats LLMs as production software, not magic. The authors connect familiar ML concerns—measurement, data quality, feedback loops, monitoring, and trade-offs—to newer LLM-specific patterns such as prompt design, fine-tuning, LoRA, RLHF, hosted APIs, Kubernetes deployment, and edge inference. The hands-on projects help ground the material, especially for readers who want more than another conceptual overview.

The book is not perfect. Some sections move quickly, and experienced MLOps engineers may wish for more depth on architecture, observability, or failure analysis. Its tooling choices may also date quickly, as LLM infrastructure continues to shift. Still, the core value holds: this is a practical guide to thinking like an engineer when working with language models. For anyone trying to turn LLM experiments into durable products, it is an easy book to justify buying.

- 10 Lessons for Agentic Coding [dbreunig.com]

- Let’s talk about LLMs [b-list.org]

- Maybe AI Isn't a Bubble After All [theatlantic.com]

- The Agent Harness Belongs Outside the Sandbox [mendral.com]

- A.I. Bots Told Scientists How to Make Biological Weapons [nytimes.com]

- Web Search for Agents in 2026 [michaellivs.com]

- How ChatGPT serves ads. Here's the full attribution loop. [buchodi.com]

- Incompressible Knowledge Probes: Estimating Black-Box LLM Parameter Counts via Factual Capacity (B. Li)

- Learning to Orchestrate Agents in Natural Language with the Conductor (S. Nielsen, E. Cetin, P. Schwendeman, Q. Sun, J. Xu, Y. Tang)

- How to conduct behavioural experiments online (M. Warburton, J. S. Tsay)

- A Model of the Language Process (B. Duderstadt, H. Helm)

- The Platonic Representation Hypothesis (M. Huh, B. Cheung, T. Wang, P. Isola)

- Image Generators are Generalist Vision Learners (V. Gabeur, S. Long, S. Peng, P. Voigtlaender, S. Sun, Y. Bao, K. Truong, Z. Wang, W. Zhou, J. T. Barron, K. Genova, N. Kannen, S. Ben, Y. Li, M. Guo, S. Yogin, Y. Gu, H. Chen, O. Wang, S. Xie, H. Zhou, K. He, T. Funkhouser, J.-B. Alayrac, R. Soricut)

- Statistical structure and the evolution of languages (X. Guo, S. Verstyuk, H. Chen, B. Zhou, S. Skiena)

- LLMs Corrupt Your Documents When You Delegate (P. Laban, T. Schnabel, J. Neville)

- Car Dependency in Urban Accessibility (B. Campanelli, F. Marzolla, M. Bruno, H. P. M. Melo, V. Loreto)

- Evolution Strategies at the Hyperscale (B. Sarkar, M. Fellows, J. A. Duque, A. Letcher, . L. Villares, A. Sims, C. Wibault, D. Samsonov, D. Cope, J. Liesen, K. Li, L. Seier, T. Wolf, U. Berdica, V. Mohl, A. D. Goldie, A. Courville, K. Sevegnani, S. Whiteson, J. N. Foerster)

From Vibe Coding to Agentic Engineering

All the videos of the week are now available in our YouTube playlist.

Upcoming Events:

Opportunities to learn from us

On-Demand Videos:

Long-form tutorials

- Natural Language Processing 7h, covering basic and advanced techniques using NLTK and PyTorch.

- Python Data Visualization 7h, covering basic and advanced visualization with matplotlib, ipywidgets, seaborn, plotly, and bokeh.

- Time Series Analysis for Everyone 6h, covering data pre-processing, visualization, ARIMA, ARCH, and Deep Learning models.
